India Moves to Regulate AI Content: New Rules May Mandate Clear Labels on AI-Generated Media

The Indian government is preparing to introduce new regulations that would require all AI-generated content to carry visible labels or disclaimers. The move comes amid rising concerns over misinformation, deepfakes, and other risks associated with rapidly evolving generative AI technologies.

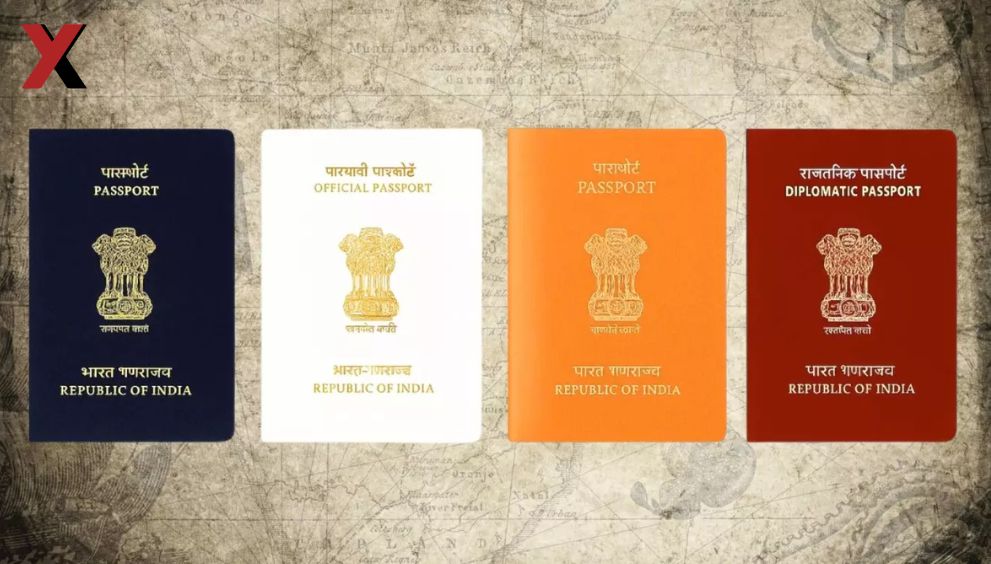

Officials from the Ministry of Electronics and Information Technology (MeitY) have said the proposed rules will apply to AI-created text, images, videos, and audio. The rules are intended to help users distinguish between human-created and machine-generated media.

Curbing Misinformation and Deepfakes

The labeling initiative seeks to prevent AI tools from being used to spread false or misleading information. Deepfake videos and AI-generated posts have increasingly shaped public discourse and social media trends, making it harder for individuals and institutions alike to verify what is authentic.

By requiring clear labels, India aims to ensure that citizens are aware when content is generated or modified by AI, promoting digital literacy and informed decision-making online.

Balancing Innovation and Responsibility

The government recognizes the benefits of AI in sectors such as healthcare, education, and business. However, regulators emphasize that technological progress must be balanced with ethical responsibility. Clear labeling rules are expected to guide AI developers, digital platforms, and media organizations toward transparent practices without stifling innovation.

The government is reportedly seeking input from industry experts, tech companies, and civil society groups to ensure practical and effective implementation of the rules.

Global Context

India’s proposed regulations align with similar initiatives worldwide. Governments in the European Union, United States, and other regions have been exploring mandatory AI content disclosures to protect citizens from misinformation and enhance trust in digital media.

Experts believe India’s regulatory stance could serve as a benchmark for other countries grappling with the growing influence of generative AI on public information.

Implications for Platforms and Users

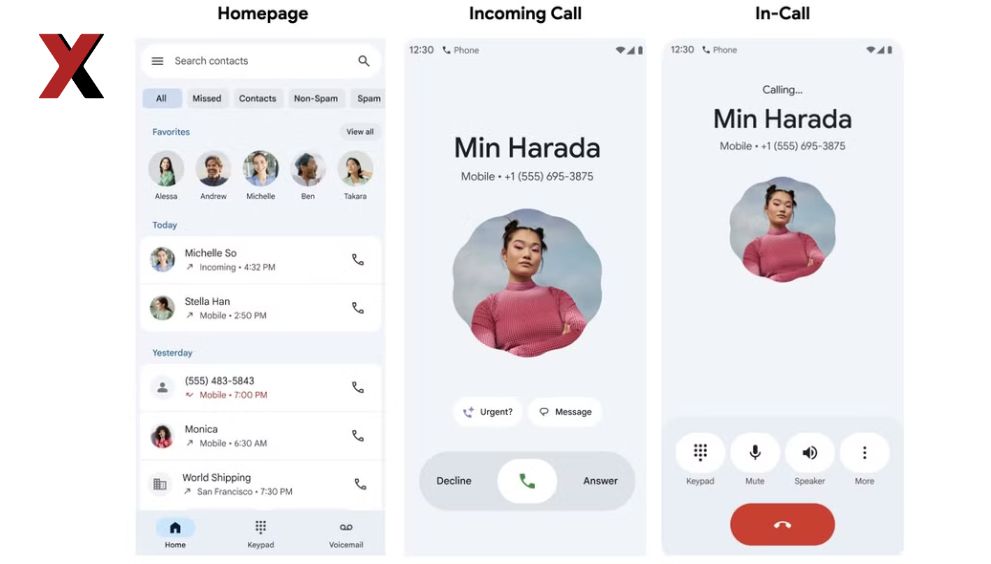

If the rules are implemented, social media platforms, news outlets, and AI content developers would need to integrate labeling mechanisms to comply. Users may soon see tags such as "AI-generated content" or "created with artificial intelligence" on posts, videos, and other digital media.

Compliance may also require investment in AI detection and content moderation tools so that misleading or unlabeled AI content can be identified and corrected promptly.